Why CFD is Stuck in the File Era - and how Flexcompute will streamline the Digital Thread

Here's a confession: I'm not a CFD expert.

I can run CFD in a pinch — set up a mesh, submit a job, make the solver converge on a good day. But I really shouldn't. My expertise is flight mechanics: stability, control, loads, performance. Or propellers, or wind tunnel testing. Depends on the day. I'm the person who needs the CFD results, not the person who generates them.

And that's exactly why Flexcompute hired me.

They looked at the market and saw a gap. Not in solver capability — Flow360 is genuinely impressive. The gap is in what happens after the solver finishes: getting CFD results to the people who actually need to make decisions with them. The speed to insight is slower than the solver.

So they brought in someone from the other side of the fence. Someone who's spent years waiting for CFD data, chasing it through email threads, wondering which geometry file produced which results.

This is what I've learned.

The short version: CFD workflows are stuck in the file era - results disconnected from their geometry, provenance tracked in spreadsheets, certification evidence scattered across email threads. We're building Flexcompute Thread: artifact-native simulation where every result you would normally save to result.png becomes a traceable asset that knows where it came from, automatically.

The Problem Everyone Knows But Nobody Talks About

If you've worked in aerospace for more than six months, you've seen this folder:

├── results_v3_final.dat
├── results_v3_final_FINAL.dat
├── results_v3_final_FINAL_fixed.dat
├── results_v3_final_FINAL_fixed_newmesh.dat
└── results_to_IGES_mappingDONTCHANGEME (4).txt

You've also seen the Excel spreadsheet. The one that's supposed to track which geometry version produced which results, who ran it, and what the reference conditions were. The one that "just gets painful to manage" (that's an actual quote from one of our customers, and it spurred me to write this blog).

And you've likely had similar conversations to these:

The canonical question in computational aerospace isn't "how accurate is the solver?"...

...it's "which IGES does this .dat file belong to?"

In a previous life I was a police officer. The job taught me one thing: chain of custody is everything. Evidence without provenance is worthless — doesn't matter how compelling it looks. Engineering data works the same way. A Cm value is just a number until you can prove where it came from.

The Standards Already Know This

Aerospace standards have been saying this for years. We're just not listening.

NASA-STD-7009B (the standard for models and simulations, updated March 2024) puts it bluntly:

"The primary purpose of this Standard is to reduce the risks associated with M&S (Modelling and Simulation)-influenced decisions by establishing a basic set of best practices applicable to any M&S and a generic, flexible M&S life-cycle process that includes several formal assessments to support the communication of M&S-based results credibility."

In plain English: if you can't prove where your simulation data came from, your decisions based on it are risky. And "credibility" isn't just about solver accuracy — it's about the entire chain from geometry to results.

The UK Ministry of Defence's RA 5812 is even more explicit about what this means for certification:

"It is to be demonstrated that the M&S utilized in support of Airworthiness-related decision-making have been derived from a credible source and are appropriate for their intended use."

The nine required components include data pedigree, verification and validation, uncertainty characterisation, change management processes, and methods for analysing results.

Everything that fragile Python scripts, Excel spreadsheets, and email threads conspicuously fail to provide.

The gap between what the standards require and what engineers actually have is enormous. And that gap is where certification risk lives.

Why CFD Vendors Don't Solve This

Here's the thing: CFD vendors sell solvers. That's their business. They optimise for speed, accuracy, scalability — things they can benchmark and put in a press release.

Data provenance? That's your problem.

"We gave you beautiful isocontours and Q-criterion renderings. What more do you want?"

I want to know which geometry produced which result. I want to understand what happens if I tweak the pylon — do I need to re-run everything, or just the affected cases? I want a response surface, not a single point on it. I want ∂CL/∂geometry, not another flythrough video.

But that's not what CFD vendors are optimising for. They optimise for the person who runs the simulation, not the person who uses the output. And those are often very different people.

I spent a little too long making this animation to show the context of a single CFD result as part of actual flight vehicle design, so I'd love you to look through it:

Here's a conversation I had repeatedly as an S&C engineer. Aero would ask: "What control surface deflections should I run?" And my response was: "You tell me. If I knew where the aileron would stall, I wouldn't need your database." The whole point of the ADB is to discover those limits — but we're asked to specify them upfront. It's backwards.

The MLOps Analogy (and the Model T)

Machine learning figured this out years ago.

In the early days of ML, data scientists had the same problem. "Which model was this? Which dataset did I train it on? What hyperparameters? Why is production different from my laptop?" They were drowning in files, notebooks, and tribal knowledge.

Then MLOps happened. Tools like MLflow, Weights & Biases, Neptune. The core insight: experiments are artifacts, and lineage is automatic. You don't manually track which data produced which model — the system knows. Every experiment is connected to its inputs, its code, its parameters, its outputs. Reproducibility is built in, not bolted on.

There's an older analogy too: the line "if I'd asked customers what they wanted, they would have said a faster horse." It's often misattributed to Henry Ford, but the analogy holds.

Ask a CFD engineer what they want and they might ask for a faster solver. What's missing is Ford's assembly line — the workflow infrastructure that makes the whole system productive, not just the solver at its centre.

Why hasn't simulation caught up?

Partly because the CFD world is older and more conservative. Partly because the vendors have no incentive to solve this — they sell compute, not workflow. And partly because the problem is hard: it requires understanding both the physics domain and modern collaboration tooling.

To be fair, the big vendors have noticed the problem. ANSYS has Minerva. Siemens has Teamcenter Simulation. These are serious enterprise platforms for Simulation Process and Data Management (SPDM). They'll track your simulation files, manage versions, link your CAD BOM to your analysis BOM. For large organisations with dedicated PLM teams and six-figure budgets, they work for their intended purpose.

But they're fundamentally asset management systems — they track files and their relationships. They don't treat derived outputs as first-class entities. Make a plot comparing CL across 50 runs? That plot doesn't know where its data came from. Export data for a report? You're back to dead data. The lineage stops at the simulation output; everything downstream is your problem again.

What's needed isn't better file management. It's a different model entirely.

Artifact-Native Simulation

So here's the idea — it's simple once you see it.

Everything is an artifact. Geometry? Artifact. Mesh? Artifact. CFD run? Artifact. Plot? Artifact. Comparison between two configurations? Artifact (with two plots as parents).

Lineage is implicit. When you create a mesh, it knows which geometry it came from — automatically. When you run CFD, the results know which mesh, which solver settings, which boundary conditions. When you create a plot, it knows which results it's aggregating.

Results can't exist without their parent geometry. This is the key constraint. In a file-based world, you can have a results file with no connection to anything. In an artifact-native world, that's structurally impossible.
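To make that constraint concrete, here is a minimal sketch of what an artifact with enforced lineage could look like. It is an illustration only, not Thread's actual data model: the `Artifact` class, its fields, and the example names are all hypothetical.

```python
from __future__ import annotations
from dataclasses import dataclass, field


@dataclass(frozen=True, eq=False)
class Artifact:
    """One node in a lineage graph (hypothetical model, not Thread's API)."""
    kind: str                           # e.g. "geometry", "mesh", "cfd_run", "plot"
    name: str
    parents: tuple[Artifact, ...] = ()
    attrs: dict = field(default_factory=dict)

    def __post_init__(self):
        # Assumption for this sketch: only geometry may be a root.
        # Anything else without a parent is rejected at construction time.
        if self.kind != "geometry" and not self.parents:
            raise ValueError(f"{self.kind} '{self.name}' has no parent artifact")

    def lineage(self) -> list[Artifact]:
        """Return every ancestor, nearest first, visiting each one once."""
        seen, order, queue = set(), [], list(self.parents)
        while queue:
            node = queue.pop(0)
            if id(node) in seen:
                continue
            seen.add(id(node))
            order.append(node)
            queue.extend(node.parents)
        return order


wing = Artifact("geometry", "wing_v3", attrs={"sweep_deg": 25.0})
mesh = Artifact("mesh", "wing_v3_medium", parents=(wing,))
run = Artifact("cfd_run", "alpha_4", parents=(mesh,), attrs={"alpha_deg": 4.0})
plot = Artifact("plot", "CL_vs_alpha", parents=(run,))

print([a.name for a in plot.lineage()])
# ['alpha_4', 'wing_v3_medium', 'wing_v3']
```

In this sketch, calling `Artifact("cfd_run", "orphan")` raises a `ValueError`, which is the code-level version of "a result with no geometry is structurally impossible".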

All attributes in a provenance chain can be viewed and plotted against one another. So you can see how Dutch Roll natural frequency varies with sweep angle, even though the sweep attribute belongs to the geometry and the natural frequency was determined by a linearised model of multiple CFD results. You can compare them in this framework because they're all chained to the same geometry and all attributes are flattened into a queryable database. (Admittedly, you'd need to filter those onto Mach/EAS or similar for the results to make sense - but that's a very simple query in this framework.)
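A rough sketch of that flattening, under heavy simplifying assumptions: each artifact here has a single parent (a real linearised model would have several CFD runs as parents), the storage is a plain dict rather than a database, and every name and number is a made-up placeholder rather than real data.

```python
# Hypothetical artifacts: id -> (parent id, attributes). Values are placeholders.
artifacts = {
    "geom_a": (None, {"sweep_deg": 20.0}),
    "geom_b": (None, {"sweep_deg": 30.0}),
    "model_a": ("geom_a", {"mach": 0.6, "dutch_roll_wn": 1.8}),
    "model_b": ("geom_b", {"mach": 0.6, "dutch_roll_wn": 2.1}),
    "model_c": ("geom_b", {"mach": 0.8, "dutch_roll_wn": 2.6}),
}


def flatten(artifact_id):
    """Merge an artifact's attributes with those of all its ancestors."""
    row = {}
    while artifact_id is not None:
        parent, attrs = artifacts[artifact_id]
        # Attributes nearer the starting artifact win if a key repeats upstream.
        row = {**attrs, **row}
        artifact_id = parent
    return row


rows = [flatten(artifact_id) for artifact_id in artifacts]

# "Natural frequency vs sweep, filtered onto Mach 0.6" as a simple query.
series = sorted(
    (row["sweep_deg"], row["dutch_roll_wn"])
    for row in rows
    if row.get("mach") == 0.6 and "dutch_roll_wn" in row
)
print(series)
# [(20.0, 1.8), (30.0, 2.1)]
```

The sweep angle lives on the geometry and the frequency lives on the model, but after flattening they sit in the same row, so plotting one against the other is just a filter and a sort.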

It's a bit like the shift from procedural to object-oriented programming. Before: data and functions floating around, connected by convention and programmer discipline. After: everything is an object with defined relationships, and the compiler enforces them.

Same shift here — from files-and-folders to artifacts-with-lineage. The system enforces the relationships that humans used to (poorly) track manually.

The AIAA calls this the "Digital Thread" — maintaining "traceability throughout the development lifecycle" so you can trace "the routes taken from the earliest Assumptions to validated Knowledge." We're implementing it in a way that doesn't require a 6-month enterprise deployment and licenses that bankrupt companies.

It'll be done implicitly from Flow360 runs, and soon with all the other exciting simulation tools we're releasing (watch this space!) - and ultimately with results from other solvers, experiments, and empirical data like ESDU and Datcom.

The Boring Foundation

I'll be honest: none of this is flashy.

Provenance tracking doesn't make for exciting demos in the way that an isocontour sim does. "Look, every artifact knows its parent!" doesn't get people out of their seats. But the flashy stuff this will enable includes adaptive sampling, ML surrogates, automated ADB generation, adjoint-based optimisation, and the list goes on.

You can't build the flashy stuff without the boring foundation which joins data together from inputs to the things we actually care about. You need to be able to chain and propagate design inputs into solver outputs and make all these available to multiple models without having to remember where the "process_results.py" file lives.

Adaptive sampling needs to know what you've already run. Surrogates need provenance to be trustworthy. Adjoints require the geometry → results chain to be explicit. Every exciting feature downstream depends on this unsexy plumbing being in place.

So that's what we're building first. The artifact model. The lineage graph. The plot anything vs anything framework. The boring-but-necessary infrastructure that makes everything else possible.

We're Building This — It's Called Thread

Flexcompute already has the solver (Flow360 — genuinely fast, I don't need to pitch it). What we're adding is the workflow layer: artifact-native, lineage-tracked, collaborative.

We're calling it Flexcompute Thread (for now) — the digital thread that connects everything. Geometry versioning with comments and diffs. Results that know where they came from. Plots that trace back through their entire lineage. Real-time collaboration (yes, Google Docs-style) so your team can work together instead of emailing files.

Flexcompute Thread is the assembly line for simulation. The infrastructure that makes the solver useful, not just fast.

We're not quite ready to release but we're far enough along that I can show you the artifact graph, the provenance view, the lineage breadcrumbs. The foundation here is real, not in a slide deck - and we're sharing with customers so it's easier to get this post out publicly now.

You can see how a plot knows* which geometry it came from, which CFD runs produced it - and as a consequence - all the solver settings accompanying those runs, the mesher settings, and all the geometry information:

*forgive the pathetic fallacy, it works here

How plots are related - one actually feeds into another, and how certain data sets don't feed into anything at all:

What This Unlocks

If you're a systems-thinker, then you'll hopefully be excited about the doors this opens up. Once you have artifact-native simulation, hard problems become tractable:

Incremental database updates. When geometry changes, the system knows which results are stale and which remain valid. You re-run the affected subset, not everything. "Do I really have to re-run the entire databank?" No: you can see which of the artifacts you've placed in plots (as live links) have become stale, and the plots themselves will highlight this if you wish.
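Once lineage is an explicit graph, finding the stale subset is a plain graph traversal. The sketch below assumes a hypothetical edge list of parent/child artifact names; it shows the idea, not how Thread stores or walks its graph.

```python
from collections import defaultdict, deque

# Hypothetical lineage edges: (parent, child).
edges = [
    ("wing_v1", "mesh_wing"),
    ("tail_v1", "mesh_tail"),
    ("mesh_wing", "run_wing_a4"),
    ("mesh_tail", "run_tail_a4"),
    ("run_wing_a4", "plot_CL"),
    ("run_wing_a4", "plot_Cm"),
    ("run_tail_a4", "plot_Cm"),
]


def stale_after_change(changed, edges):
    """Return every artifact downstream of `changed` (breadth-first walk)."""
    children = defaultdict(list)
    for parent, child in edges:
        children[parent].append(child)

    stale, queue = set(), deque([changed])
    while queue:
        for child in children[queue.popleft()]:
            if child not in stale:
                stale.add(child)
                queue.append(child)
    return stale


print(sorted(stale_after_change("tail_v1", edges)))
# ['mesh_tail', 'plot_Cm', 'run_tail_a4']
```

Changing the tail leaves `mesh_wing`, `run_wing_a4`, and `plot_CL` untouched, so only the tail cases need re-running, and `plot_Cm` is flagged because one of its inputs is out of date.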

Sensitivity analysis as a query. If the geometry → mesh → CFD → results chain is explicit, you can differentiate through it. "Which design parameters influence Cm_alpha the most?" becomes a query, not a PhD thesis.
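As a toy illustration of what such a query could compute, the sketch below ranks design parameters by scaled central finite differences. To be clear about the assumptions: `toy_cm_alpha` is a made-up linear stand-in for the whole geometry → mesh → CFD → results chain, the parameter names and coefficients are placeholders, and finite differencing is a swapped-in simple technique, not an adjoint.

```python
def toy_cm_alpha(params):
    """Made-up linear stand-in for the full chain. Not real aerodynamics."""
    return -0.5 - 0.10 * params["tail_area_m2"] + 0.006 * params["sweep_deg"]


def scaled_sensitivities(chain, baseline, rel_step=1e-3):
    """Central finite differences, scaled by each parameter's baseline value.

    Assumes every baseline value is non-zero, so the step size is non-zero.
    """
    result = {}
    for name, value in baseline.items():
        h = rel_step * value
        up = chain({**baseline, name: value + h})
        down = chain({**baseline, name: value - h})
        result[name] = (up - down) / (2 * h) * value
    return result


baseline = {"tail_area_m2": 2.0, "sweep_deg": 25.0}
sens = scaled_sensitivities(toy_cm_alpha, baseline)

# Rank parameters by the magnitude of their scaled influence on Cm_alpha.
ranked = sorted(sens, key=lambda name: abs(sens[name]), reverse=True)
print(ranked)
# ['tail_area_m2', 'sweep_deg']
```

In a real setting each call to `chain` would be a set of stored or newly queued runs, which is exactly why the explicit lineage matters: the query needs to know which results belong to which perturbed geometry.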

Collaboration with context. Comments and conversations attach to specific artifacts, not floating in Slack. When you look at a result, you see who ran it, why, and what the team said about it. This matters because decisions live in conversations — the reasoning behind a design choice is as important as the choice itself.

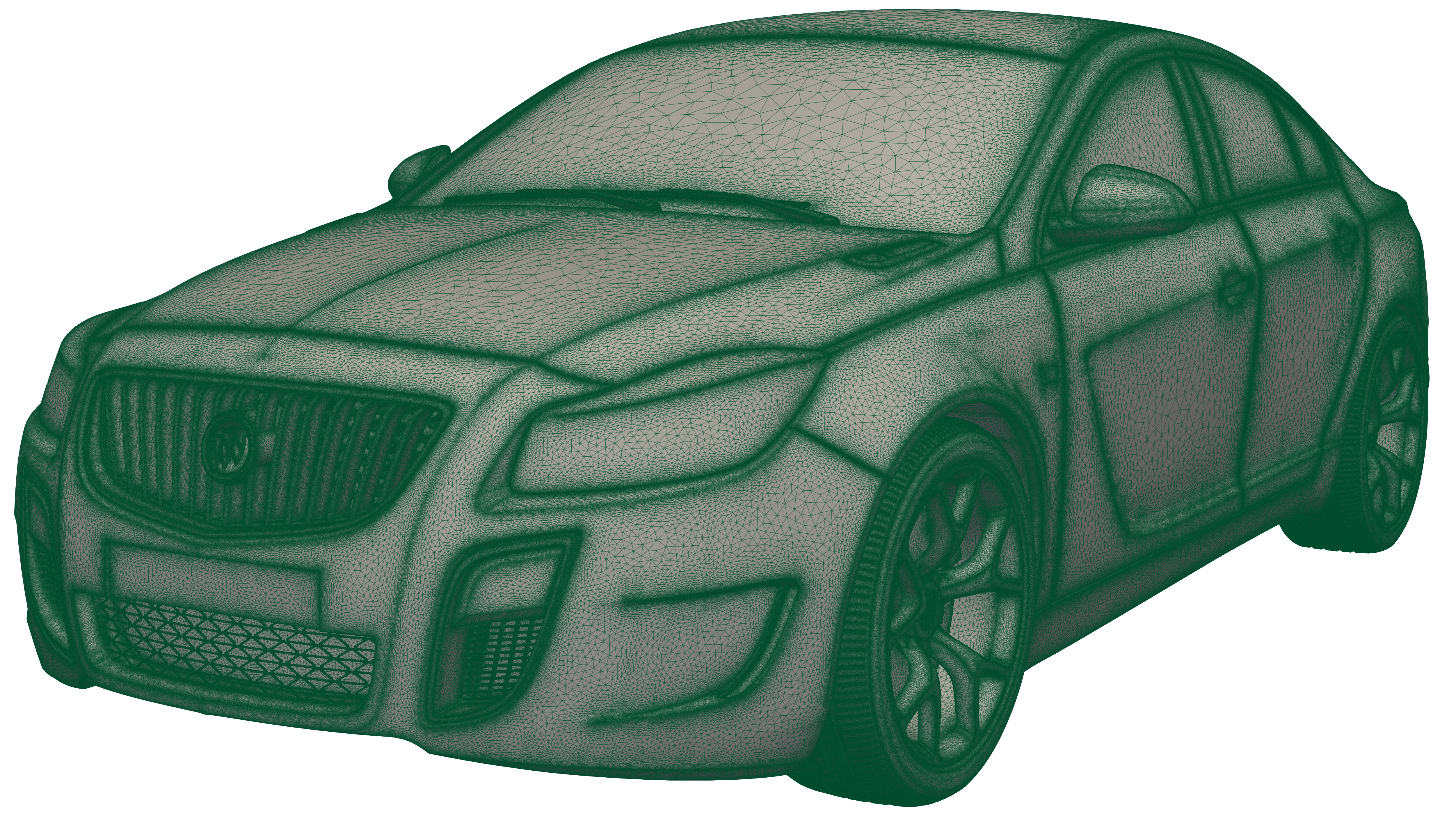

Format-agnostic geometry ingestion. Bring your geometry in whatever form you have it. OpenVSP? STEP? IGES? Parasolid? Thread handles the conversion and tracks the lineage. Your conceptual design team can work in OpenVSP while your detailed design team works in CATIA — and everything stays connected.

Certification-ready audit trails. When the DER asks where a number came from, you click "show lineage" and the answer is right there — timestamped, immutable, complete. NASA-STD-7009B compliance becomes a byproduct of normal work, not a separate documentation exercise.
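One common way to make a record tamper-evident is to hash its contents together with the hashes of its parents. The sketch below uses that approach as an assumption about how "immutable" could be achieved; the `seal` and `verify` helpers, the record layout, and the example values are all hypothetical.

```python
import hashlib
import json


def seal(kind, payload, timestamp, parent_digests=()):
    """Build a record whose digest covers its content and its parents' digests."""
    record = {
        "kind": kind,
        "payload": payload,
        "timestamp": timestamp,
        "parents": sorted(parent_digests),
    }
    blob = json.dumps(record, sort_keys=True).encode("utf-8")
    return {**record, "digest": hashlib.sha256(blob).hexdigest()}


def verify(record):
    """Recompute the digest and compare it with the stored one."""
    body = {key: value for key, value in record.items() if key != "digest"}
    blob = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(blob).hexdigest() == record["digest"]


# Placeholder values for illustration only.
geom = seal("geometry", {"file": "wing.step"}, "2024-01-01T09:00:00Z")
run = seal("cfd_run", {"Cm": -0.12}, "2024-01-01T10:00:00Z", [geom["digest"]])

# Editing a sealed record after the fact breaks verification.
tampered = {**run, "payload": {"Cm": -0.10}}

print(verify(run), verify(tampered))
# True False
```

Because the run's digest includes the geometry's digest, swapping in a different geometry changes every digest downstream, which is what lets "show lineage" double as evidence.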

Access for non-CFD engineers. The control engineer who needs hinge moments can query the database directly. If the data exists, they get it. If there's a gap (in a plot of anything vs anything), they click it and queue a run and see the result in minutes.
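Spotting a gap is a set difference between the cases someone wants and the cases that already exist. A minimal sketch, assuming a hypothetical database keyed by (Mach, alpha) pairs with placeholder values:

```python
from itertools import product

# Hypothetical: (mach, alpha_deg) cases that already have results.
completed = {(0.6, 0.0), (0.6, 4.0), (0.8, 0.0)}


def find_gaps(machs, alphas, completed):
    """Return the requested (mach, alpha) cases with no result yet."""
    return [case for case in product(machs, alphas) if case not in completed]


gaps = find_gaps([0.6, 0.8], [0.0, 4.0, 8.0], completed)
print(gaps)
# [(0.6, 8.0), (0.8, 4.0), (0.8, 8.0)]
```

Each entry in `gaps` is a candidate run to queue, and the new result would attach to the same geometry lineage as its neighbours.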

Live plots, not dead PNGs. When you create a plot in Flexcompute Thread, it becomes an artifact — shareable, traceable, alive. You can still export to PNG if you really want to (old habits die hard), but you can also share the plot itself as a live web link. Your colleagues see it exactly as you intended: same data, same axes, same context. If they want to explore — zoom in, change the alpha range, toggle a data series — they can. Their changes are temporary; your plot stays intact. And if they discover something worth keeping, they save it as a new derived plot, with lineage back to yours. No more "which version of this chart is current?" — the chart is the version.

And because everything is an artifact with queryable metadata, you can plot anything against anything. Rolling moment vs roll rate? Obviously. But also: CL vs time-of-day the run completed. Residual convergence vs mesher version. CD vs "runs by Sarah". 3D surfaces, histograms, scatter matrices — if the data exists, you can visualise it. The system doesn't care whether you're plotting physics or process; it's all just artifacts with properties.

And here's a sneak peek of the other stuff we're folding in:

Version control for a multitude of geometry formats, including VSP, ESP, and whatever CAD you wish to import:

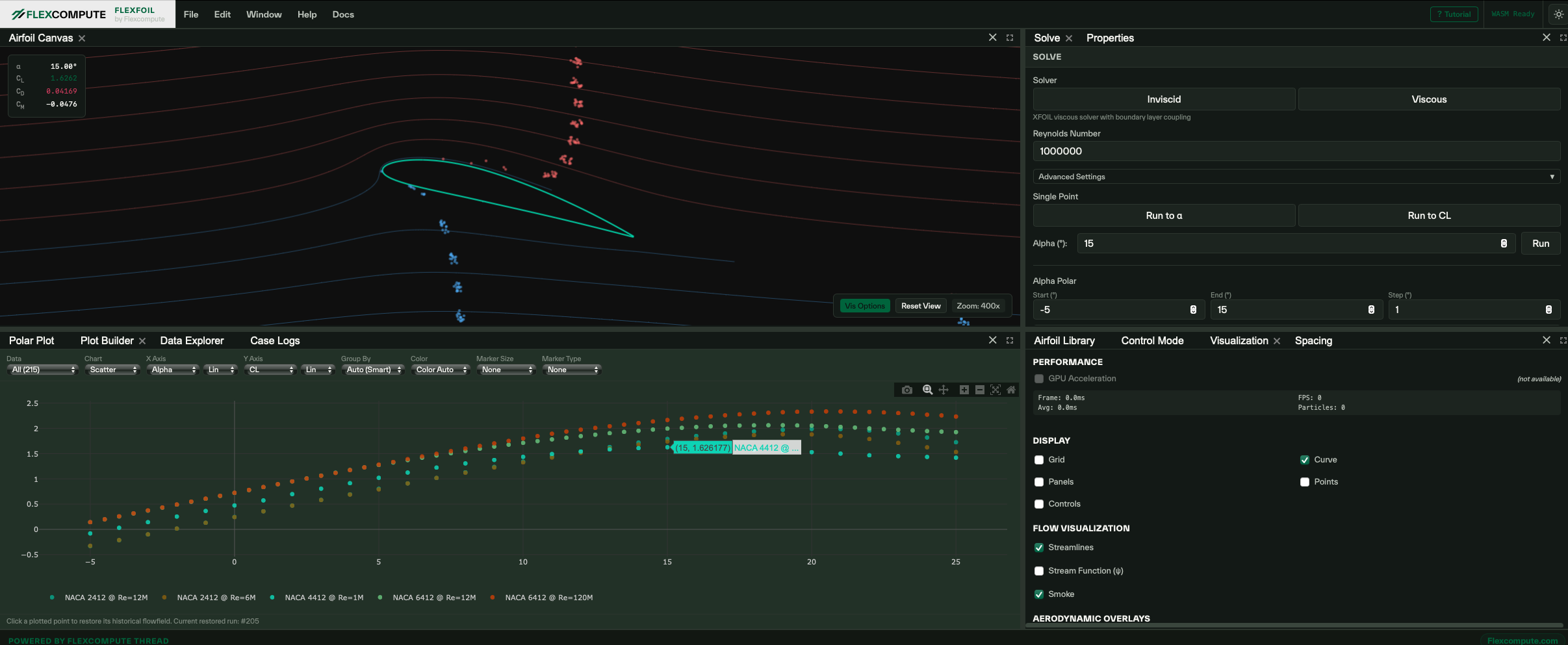

Reduced-order methods, from 3D vortex codes down to a browser-based, easy-to-play-with XFOIL-like code (thank you to Mark for blessing this one):

If this resonates — if you've felt the pain of file-based simulation and wondered why nobody's fixed it — I'd genuinely like to talk.

Not a sales pitch (I'm an engineer, I'm bad at sales). Just a conversation about what you actually need, and whether we're building the right thing.

Addendum: On Q-Criterion

A reader pointed out that the above might read as dismissive of Q-criterion. Fair play — I am having a bit of a spicy poke at a lot of LinkedIn posts that show amazing flows with zero relation to physics insight or design criteria. Every CFD vendor's marketing deck features the same gorgeous isosurface renders, as if the primary output of computational aerodynamics is desktop wallpaper. But...Q-criterion itself? I've used it - I've sat in ParaView tracing rotor wake impingement onto H-tails, trying to figure out why the ADB was doing something unexpected. So it is useful and I was being hyperbolic.

That said, that's not what most CFD gets used for.

On production vehicle programmes, the bulk of the compute goes to ADB development — systematic sweeps across the flight envelope, generating integrated coefficients for downstream disciplines. Those surgical flow diagnostic exercises? They're important, but they're a small fraction of the total effort. And more to the point, we often don't know where to look until the integrated data tells us something is wrong.

Imagine you see a suspicious nonlinearity in Cm-alpha: a pitching moment break that shouldn't be there. But you're looking at your MATLAB/Python/Excel wrappers/interpreters on CSVs from an ADB delivery, not a ParaView session. To understand what's causing it, you need to email the aerodynamicist, who needs to figure out which of the hundreds of solutions corresponds to that flight condition, then fire up their visualisation tool. By the time anyone looks at the actual flow, the design has often moved on - or the investigation causes a costly programme delay that makes everyone unhappy and stressed.

That disconnect is the problem. Not Q-criterion itself — the lack of joined-upness.

Thread fixes this. When you see an anomaly in your polar, you right-click it and you have the option to open the volume solution in a new tab. Flow360 supports RANS and DES, so you can run the same conditions at different fidelity levels and see where the integrated coefficients diverge. When RANS and DES disagree on Cm at high alpha, you compare the volume solutions side-by-side to see what's being resolved in one and dissipated in the other. No hunting through folders. No "which run was that again?" The curves tell you where to look; Thread shows you what to look at.

Harry Smith is Director of Flight Sciences at Flexcompute. His path here was not linear: he's been a professor and a wind tunnel engineer, worked in sustainability, and (in a previous life) was a police officer in the UK. Somewhere along the way he became the person waiting for CFD results instead of generating them, and started wondering why the workflows hadn't improved since the 1990s. Now he's doing something about it.
